Learning About Letters (and being terrified of the letter “V”)

I’m pretty sure I’ve blogged about this before, but we had this on VHS when I was a kid and it’s still in my head somewhere.

Comments:

- The hair on that lilac Muppet at 1:51 always made me super happy. It looks like such a nice texture.

- “‘C’ is for Cookie” is a banger.

- The deadpan delivery of “Harry the Horse” at 9:05 is great.

- In Bert’s defense, “linoleum” is a pretty awesome word.

- That story for “V” (19:50) terrified me as a kid. I’d leave the room every time, haha.

- That segment from 21:09-22:38 was always one of my favorite parts.

Woo!

Sign

The only thing I really know in ASL, apart from about 5 signs, is the alphabet. This app is a cool way to practice recognizing the letter signs in a QWERTY layout, even if you don’t need to send any sign language messages. It’s got a few word signs as well.

I’d really like to learn ASL to the point where I could have a conversation with someone using it (at least, a conversation in which I wouldn’t have to look ridiculous spelling out every single word). I CAN spell relatively quickly, though; I think that comes from the fact that when I’m walking and listening to music, I like to try and sign the first letter of every word in the song as it plays.

Because what else am I supposed to be doing with my hands when I’m walking?

Do babies deprived of disco exhibit a failure to jive?

You know, sometimes the most “pointless” analyses turn up the coolest stuff.

Today I had…get ready for it…FREE TIME! So I decided to try analyzing a fairly large dataset using SAS (’cause SAS can handle large datasets better than R and because I need to practice my coding anyway).

I went here to get a list of the 5,000 most common words in the English language. What I wanted to do was answer the following questions:

1. What is the frequency distribution of letters looking at just the first letter of each word?

2. Does the distribution in (1) differ from the overall distribution in the whole of the English language?

3. Does either frequency distribution hold for the second letter, third letter, etc.?

LET’S DO THIS!

So the frequency distribution of characters for the first letter of words is well-established. Wiki, of course, has a whole section on it. Note that this distribution is markedly different than the distribution when you consider the frequency of character use overall.

I found practically the same thing with my sample of 5,000 words.

So this wasn’t really anything too exciting.

What I did next, though, was to look at the frequencies for the next four letters (so the second letter of a word, the third letter, the fourth, and the fifth).

Now obviously there were many words in the top 5,000 that weren’t five letters long. So with each additional letter I did lose some data. But I adjusted the comparative percentages so that any differences we saw weren’t due to the data loss.

Anyway. So what I did was plot the “overall frequency” in grey—that is, the frequency of each letter in the whole of the English language—against the observed frequency in my sample of 5,000 words in red—again, for the first, second, third, fourth, and fifth letter of the word.

And what I found was actually really interesting. The further “into” a word we got, the closer the frequencies conformed to the overall frequency in the English language.

The x-axis is the letter (A=1, B=2,…Z=26). The y-axis is the number of instances out of a sample of 5,000 words. See how the red distribution gets closer in shape to the grey distribution as we move from the first to the fifth letter in the words? The “error”–the absolute value of the overall difference between the red and grey distributions–gets smaller with each further letter into the word.
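For anyone who wants to try the position-by-position comparison, here is a rough Python sketch (the analysis above was done in SAS, so this is not that code). The function names are made up for illustration. One big assumption: the sketch uses the pooled letters of the word list itself as the “overall” baseline, whereas the charts above compare against a published table for the whole language. Dividing by the number of words long enough to have a given position is one way to keep the shrinking sample from distorting the comparison.

```python
from collections import Counter
import string


def positional_shares(words, position):
    """Share of each letter at a 0-based position, counting only words
    that are long enough to have a letter there."""
    letters = []
    for word in words:
        if len(word) > position:
            ch = word[position].lower()
            if ch in string.ascii_lowercase:
                letters.append(ch)
    counts = Counter(letters)
    total = len(letters)
    if total == 0:
        return {ch: 0.0 for ch in string.ascii_lowercase}
    return {ch: counts[ch] / total for ch in string.ascii_lowercase}


def overall_shares(words):
    """Share of each letter across every position of every word
    (a stand-in baseline; swap in a published table if you have one)."""
    counts = Counter(
        ch for word in words for ch in word.lower()
        if ch in string.ascii_lowercase
    )
    total = sum(counts.values())
    if total == 0:
        return {ch: 0.0 for ch in string.ascii_lowercase}
    return {ch: counts[ch] / total for ch in string.ascii_lowercase}


def total_abs_error(observed, baseline):
    """Sum of absolute differences between two letter distributions."""
    return sum(abs(observed[ch] - baseline[ch]) for ch in string.ascii_lowercase)


# Toy word list, purely for illustration.
words = ["the", "of", "and", "that", "have", "with", "this", "from"]
baseline = overall_shares(words)
for pos in range(3):
    error = total_abs_error(positional_shares(words, pos), baseline)
    print(f"letter {pos + 1}: error = {error:.3f}")
```

With a real 5,000-word list, the pattern described above would show up as the error shrinking from one position to the next.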

I was going to go further into the words, but 1) I left my data at school and 2) I figured anyway that after five letters, I would find a substantial drop in data because there would be a much lower count of words that were 6+ letters long.

But anyway.

COOL, huh? It’s like a reverse Benford’s Law.*

*Edit: actually, now that I think about it, it’s not really a REVERSE Benford’s Law; as I found when I analyzed that pattern, it too rapidly disintegrated as we moved to the second and third digit in a given number and the frequency of the digits 0 – 9 conformed to the expected frequencies (1/10 each).

Benford’s Law? More like Benford’s LOL

Okay, today’s going to be a quick little blog ‘cause I’m busy trying to organize/transfer/protect from any possible massive hard drive failures my music library. It’s stressing me out.

While I was working on the “References” section of a textbook today at work, I noticed a pattern that I’ve come in contact with several times: there appeared to be a lot more “entries” that started with a letter from the first half of the alphabet (A – M) rather than the latter half (N – Z). I’ve done at least one other analysis regarding this topic, but I decided to do another slightly different one to see if it applied in this case.

QUESTION OF INTEREST

So what is Benford’s Law? For those of you who don’t want to click the link (lazy fools!), Benford’s Law states that with most types of data, the leading digit is a 1 almost one-third of the time, with that probability decreasing as the digit (from 1 to 9) increases. That is, rather than the probability of being a leading digit being equal for each number 1 through 9, the probabilities range from about 30% (for a 1) to about 4.6% (for a 9).
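The post doesn’t spell out the formula, but the usual statement of Benford’s Law is that a leading digit d shows up with probability log10(1 + 1/d). A quick Python sketch of those percentages (the function name is just for illustration):

```python
import math


def benford_probability(digit):
    """Probability that `digit` (1 through 9) is the leading digit under Benford's Law."""
    if not 1 <= digit <= 9:
        raise ValueError("leading digit must be between 1 and 9")
    return math.log10(1 + 1 / digit)


for d in range(1, 10):
    print(d, f"{benford_probability(d):.1%}")
# The first line printed is "1 30.1%" and the last is "9 4.6%".
```

The nine probabilities add up to 1, since the product of (d + 1)/d for d = 1 through 9 is 10.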

What I want to see is this: is there a “Benford’s Law” type phenomenon for the letters of the alphabet? That is, do letters in the first half of the alphabet appear as the first letter of words more often than letters in the latter half of the alphabet?

HYPOTHESIS

In a given set of random words, a greater number of words will start with a letter between A and M than with a letter between N and Z.

METHOD

Using this awesome little utility, I generated (approximately) 5,000 words each from The Bible, Great Expectations, and The Hitchhiker’s Guide to the Galaxy. I then counted how many words there were starting with A, how many words there were starting with B, and so on for each letter of the alphabet.

I then did two other breakdowns of the letters:

A) I divided the alphabet in half (A – M and N – Z) and counted the total number of words for each group.

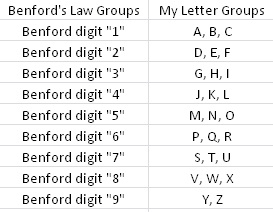

B) In order to “mirror” a sort of Benford’s Law type of structure, I divided the 26 letters into nine groups (eight groups of three letters each, one group of two letters). I wanted to make a similar breakdown of groups to the nine numbers that Benford’s Law applies to, just to see if that sort of arbitrary screwing around did anything. Visualization ‘cause I suck at explaining stuff when I’m in a hurry:

Kay? Kay.
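If you want to redo the counting yourself, both breakdowns can be sketched in a few lines of Python. This is an illustration, not what was actually used for the post. The function names are invented, and the nine groups are assumed to be consecutive letters (ABC, DEF, and so on, with YZ as the two-letter group), which may not match the grouping in the picture.

```python
from collections import Counter
import string

ALPHABET = string.ascii_uppercase


def first_letter_counts(words):
    """Count how many words start with each letter A-Z (other words are skipped)."""
    return Counter(
        w[0].upper() for w in words if w and w[0].upper() in ALPHABET
    )


def half_counts(counts):
    """Totals for the first half (A-M) and second half (N-Z) of the alphabet."""
    first = sum(counts[ch] for ch in ALPHABET[:13])
    second = sum(counts[ch] for ch in ALPHABET[13:])
    return first, second


def nine_group_counts(counts):
    """Totals for nine consecutive groups: eight of three letters, then YZ.
    Assumption: the real grouping may have put the two-letter group elsewhere."""
    groups = [ALPHABET[i:i + 3] for i in range(0, 26, 3)]
    return {group: sum(counts[ch] for ch in group) for group in groups}


# Toy input, purely for illustration.
sample = "in the beginning god created the heaven and the earth".split()
counts = first_letter_counts(sample)
print(half_counts(counts))
print(nine_group_counts(counts))
```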

RESULTS

I made charts!

By “half of the alphabet” in what is probably the most worthless visual ever:

By semi-arbitrary group (dark blue) with Benford’s percentages by number (light blue) for comparison:

DISCUSSION

Well, that whole thing sucked. Okay, so obviously it’s not a perfect pattern match and I didn’t do any stats (I WAS IN A HURRY) to see whether there was any statistical significance or anything, but it was fun to screw around with for an hour or so. I wonder how different the results would be (if at all) if I were to use truly random words from the English language, not just random words selected out of three works of fiction. Perhaps material for a later blog…?

END!

Artz n’ Letterz

So this is something I noticed a long time ago, but going through my playlists in iTunes this afternoon made the observation come to the forefront of my mind: when I sort my “Top Favorites” playlist by artist, I notice that a large amount of the songs (68%) are by artists whose names begin with a letter from the first half of the alphabet (A – M). When I sort my entire music library in this manner, I find the same proportion (okay, 67%…it’s pretty damn close). And you know what’s more interesting? If I sort by the TITLE of the song, I get the same proportion again! OOH, OOH, and sorting my freaking book list gives the same 67% as the music.

I find this quite fascinating. Has anyone else ever noticed this type of pattern in any of their things? It’s interesting to me that this 2:1 ratio keeps coming up. This requires exploration.

Hypothesis: this 2:1 ratio occurs because the first half of the alphabet contains more letters that appear more often as the first letters in English words.

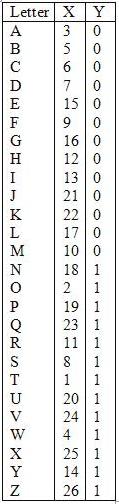

Method: utilizing letterfrequency.org, I found the list of the frequencies of the most common letters appearing as the 1st letter in English words*. I used this list as a ranking and, using a point-biserial correlation, correlated this ranking with a dichotomized list of the letters, in which letters in the first half of the alphabet were assigned a value of “0” and those in the second half of the alphabet were assigned a value of “1.”

Results: here are the two values being correlated alongside their respective letters:

Where the “X” column is ranking by the frequency of appearance as the first letter of a word and the “Y” column is a dichotomized ranking by alphabetical order. A point-biserial correlation is necessary because one of the variables is dichotomous. So what were the results of the correlation? rpb = .20, p = .163.
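For the curious, a point-biserial correlation is just a Pearson correlation where one variable is coded 0/1, and it can be written in terms of the two group means. Here is a minimal Python sketch of that formula. It is not the software used for the numbers above, the function name is made up, and the ranking in the example is a placeholder (plain alphabetical order), not the letterfrequency.org list.

```python
import math


def point_biserial(values, groups):
    """Point-biserial correlation between numeric `values` and 0/1 `groups`.

    Uses r = (mean1 - mean0) / sd * sqrt(n1 * n0 / n**2),
    where sd is the population standard deviation of all values.
    """
    if len(values) != len(groups):
        raise ValueError("values and groups must be the same length")
    n = len(values)
    group0 = [v for v, g in zip(values, groups) if g == 0]
    group1 = [v for v, g in zip(values, groups) if g == 1]
    if not group0 or not group1:
        raise ValueError("both groups need at least one value")
    mean_all = sum(values) / n
    sd = math.sqrt(sum((v - mean_all) ** 2 for v in values) / n)
    if sd == 0:
        raise ValueError("values must not all be identical")
    mean0 = sum(group0) / len(group0)
    mean1 = sum(group1) / len(group1)
    return (mean1 - mean0) / sd * math.sqrt(len(group0) * len(group1) / n ** 2)


# Placeholder data: rank 1-26 in alphabetical order (NOT the real frequency ranking).
placeholder_ranks = list(range(1, 27))
alphabet_half = [0] * 13 + [1] * 13  # 0 = A-M, 1 = N-Z
print(round(point_biserial(placeholder_ranks, alphabet_half), 3))
```

To reproduce the analysis, replace `placeholder_ranks` with each letter’s rank as a first letter, listed in alphabetical order.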

Conclusion: well, the correlation isn’t statistically significant (it would need p < .05) by a long shot, but I’ll interpret it anyway. A positive correlation in this case means that letters with the larger dichotomy value (in this case, those coded “1”) tend to also be those same letters with a “worse” (or higher-value) coding when ranked by frequency as the first letter in English words. So in plain English: there is a positive correlation between letters appearing in the second half of the alphabet and their infrequency as the first letter in English words. In other words, letters appearing in the first half of the alphabet are more likely to appear as the first letter in English words. Not statistically more likely, but more likely.

Meh. Would have been cooler if the correlation were significant, but what are you going to do? Data are data.

*Q, V, X, and Z were not listed in the ranking, but given the letters, I assume that they were so infrequent as first letters that they were all at the “bottom.” Therefore, that is where I put them.

Scrabble Letter Values and the QWERTY Keyboard

Hello everyone and welcome to another edition of “Claudia analyzes crap for no good reason.”

Today’s topic is the relationship between the values of the letters in Scrabble and the frequency of use of the keys on the QWERTY layout keyboard.

This analysis took three main stages:

- Plot the letters of the keyboard by their values in Scrabble.

- Plot the letters of the keyboard by their frequency of use in a semi-long document (~50 pages).

- Compute the correlation between the two and see how strongly they’re related.

Step 1

There are 7 categories of letter scores in Scrabble: 1-, 2-, 3-, 4-, 5-, 8-, and 10-point letters. The first thing I decided to do was create a gradient to overlay atop a QWERTY and see what the general pattern is. Here is said overlay:

Makes sense. K and J are a little wonky, but that might just be because the fingers on the right hand are meant to be skipping around to all the other more commonly-used letters placed around them. This was the easiest part of the analysis (except for making that stupid gradient; it took a few tries to get the colors at just the right differences for it to be readable but not too varying).

Step 2

I found a 50 or so page Word document of mine that wasn’t on anything specific and broke it down by letter. I put it into Wordle and got this lovely size-based comparison of use:

I then used Wordle’s “word frequency” counter to get the number of times each letter was used. I then ranked the letters by frequency of use.

I took this ranking and compared it to the category breakdown used in Scrabble—that is, since there are 10 letters that are given 1 point each, I took the 10 most frequently used letters in my document and assigned them to group 1, the group that gets a point each. There are 2 letters in Scrabble that get two points each; I took the 11th and 12th most frequently used letters from the document and put them into group 2. I did this for all the letters, down until group 7, the letters that get 10 points each.
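The grouping step can be sketched in Python like so. This is an illustration rather than what was done with Wordle. The function name is invented, the group sizes beyond the first two (10 and 2, stated above) come from the standard English Scrabble tile values, and ties in frequency are broken alphabetically, which is an arbitrary choice.

```python
from collections import Counter
import string

# How many letters share each point value in standard English Scrabble,
# in the order 1, 2, 3, 4, 5, 8, 10 points (groups 1 through 7).
GROUP_SIZES = [10, 2, 4, 5, 1, 2, 2]


def frequency_groups(text):
    """Rank the 26 letters by how often they occur in `text`, then cut the
    ranking into groups with the same sizes as the Scrabble point groups."""
    counts = Counter(ch for ch in text.lower() if ch in string.ascii_lowercase)
    # Most frequent first; ties broken alphabetically (arbitrary choice).
    ranked = sorted(string.ascii_lowercase, key=lambda ch: (-counts[ch], ch))
    groups = {}
    start = 0
    for group_number, size in enumerate(GROUP_SIZES, start=1):
        for ch in ranked[start:start + size]:
            groups[ch] = group_number
        start += size
    return groups


# Toy text, purely for illustration; a real run would use the whole document.
sample_text = "So this wasn't really anything too exciting."
groups = frequency_groups(sample_text)
print(sorted(ch for ch, g in groups.items() if g == 1))
```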

So at this point I had a ranking of the frequency of use of letters in an average Word document in the same metric as the Scrabble letter breakdown. I made a similar graph overlaying a QWERTY with this data:

Pretty similar to the Scrabble categories, eh? You still get that wonky J thing, too.

Side-by-side comparison:

Step 3

Now comes the fun part! I had two different ways of calculating a correlation.

The first way was the category to category comparison, which would require the use of the Spearman correlation coefficient (used for rank data). Essentially, this correlation would measure how closely a letter’s group (e.g., group 1, group 4) in the Scrabble ranking tracked its group in the real data ranking. The Spearman correlation returned was 0.89. Pretty freaking high.
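A Spearman correlation is a Pearson correlation computed on ranks, with tied values sharing the average of the ranks they would occupy (and with only 7 groups for 26 letters, there are plenty of ties). Here is a minimal Python sketch of that idea. It is not the software behind the 0.89 above, the function names are made up, and the example groups are hypothetical.

```python
import math


def average_ranks(values):
    """Rank values from 1 upward, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared_rank
        i = j + 1
    return ranks


def pearson(x, y):
    """Plain Pearson correlation between two equal-length sequences."""
    if len(x) != len(y) or not x:
        raise ValueError("inputs must be non-empty and the same length")
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("each input needs at least two distinct values")
    return cov / math.sqrt(var_x * var_y)


def spearman(x, y):
    """Spearman correlation: Pearson correlation of the average ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical groups for seven letters (NOT the real data from the document).
scrabble_groups = [1, 2, 3, 4, 5, 6, 7]
document_groups = [1, 3, 2, 4, 6, 5, 7]
print(round(spearman(scrabble_groups, document_groups), 2))
```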

I could also compare the Scrabble categories against the raw frequency data, which would require the use of the polyserial correlation. Since the frequency decreases as the category number increases (group 1 has the highest frequencies, group 7 has the lowest), we would expect some sort of negative correlation. The polyserial correlation returned was -.92. Even higher than the Spearman.

So what can we conclude from this insanity? Basically that there’s a pretty strong correlation between how Scrabble decided to value the letters and the actual frequency of letter use in a regular document. Which is kind of a “duh,” but I like to show it using pretty pictures and stats.

WOO!

Today’s song: Sprawl II (Mountains Beyond Mountains) by Arcade Fire

Helvetica Headache

Well, this was going to be a small simple thing, but, as you know, that never is the case when I’m involved. So I now present to you a semi-objective ranking of the alphabet!

I decided that the letters would be judged according to six factors:

-Uppercase Aesthetic Value (visual) (UAV visual): aesthetic value based on visual appeal of uppercase letters typed in 40 pt. Arial.

-Lowercase Aesthetic Value (visual) (LAV visual): aesthetic value based on visual appeal of lowercase letters typed in 40 pt. Arial.

-Uppercase Aesthetic Value (written) (UAV written): aesthetic value judged on ease* of written uppercase letters, in the style of Arial.

-Lowercase Aesthetic Value (written) (LAV written): aesthetic value judged on ease* of written lowercase letters, in the style of Arial.

-Phonetic Aesthetic Value (PAV): aesthetic value judged on ease of spoken sound. Letters with multiple sounds had each sound ranked. The means of these rankings are reported.

-Aural Aesthetic Value (AAV): aesthetic value judged on appeal of spoken sound. Letters with multiple sounds had each sound ranked. The means of these rankings are reported here.

Here is the table of the rankings, followed by a column of the final ranked letters. Have fun (asterisks denote tied values)!