Do all path diagrams want to grow up to be models?

HI!

Remember this?

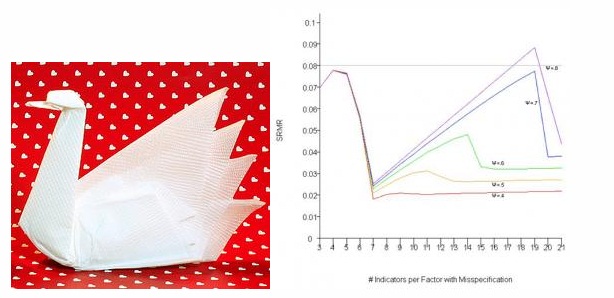

This is probably the strangest result I found while writing my thesis. I’ll explain what it’s showing ‘cause it certainly isn’t obvious from this graph (especially if you don’t know structural equation modeling, aka SEM) and then tell you why I’d like to study something like this in depth.

SEM 101!

SEM is basically the process by which researchers attempt to construct models of the relationships amongst variables that best fit a given data set. For example, if the data I’m interested in are a bunch of variables related to the Big Five personality factors and I as a researcher have evidence to support a specific structure of relationships amongst these variables and factors, I can construct a structural equation model that numerically represents how I think the variables are related. I can then test my model against the actual relationships amongst the variables in the actual data.
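For the curious, here’s roughly what “numerically represents” means for a factor model. The hypothesized structure implies a covariance matrix, Σ = ΛΦΛᵀ + Θ. Here Λ holds the indicator loadings, Φ holds the factor variances and covariances, and Θ holds the error variances and covariances. Testing the model then comes down to comparing that implied matrix with the one observed in the data. This is a minimal plain-Python sketch. It assumes standardized variables and made-up loadings for three hypothetical indicators (A, B, C) on one factor X. Real SEM software estimates these numbers from the data rather than taking them as given.

```python
def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]


def transpose(a):
    """Swap rows and columns."""
    return [list(col) for col in zip(*a)]


def implied_cov(loadings, factor_cov, error_cov):
    """Model-implied covariance matrix: Lambda * Phi * Lambda^T + Theta."""
    common = matmul(matmul(loadings, factor_cov), transpose(loadings))
    n = len(common)
    return [[common[i][j] + error_cov[i][j] for j in range(n)] for i in range(n)]


# Hypothetical one-factor model: A, B, C load on X (factor variance fixed at 1).
lam = [[0.8], [0.7], [0.6]]
phi = [[1.0]]
# Error variances chosen so every indicator ends up with a variance of 1.
theta = [[1 - 0.8 ** 2, 0.0, 0.0],
         [0.0, 1 - 0.7 ** 2, 0.0],
         [0.0, 0.0, 1 - 0.6 ** 2]]

sigma = implied_cov(lam, phi, theta)
for row in sigma:
    print([round(x, 2) for x in row])
```

Off the diagonal, each implied correlation is just the product of the two loadings (0.8 × 0.7 = 0.56 for A and B). That product is what “related to the same factor” looks like in numbers.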

Fit indices, the whole topic of my thesis, are calculations that let researchers quantify how accurately their hypothesized model represents the real structure of the relationships amongst the variables in the data. Many fit indices range from 0 to 1, though what a score of 0 or 1 means differs depending on the index. Model fit can be affected by a bunch of stuff, but most obviously (and importantly) it is affected by inaccuracies in the hypothesized model.

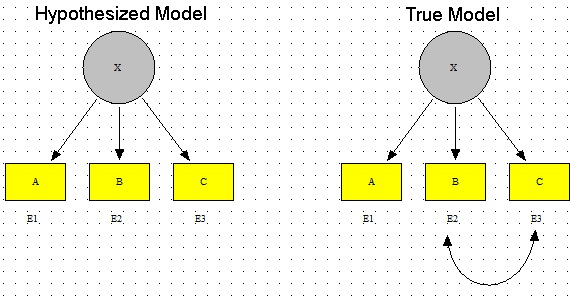

For example, say I had a model in which variable A, variable B, and variable C were all related to factor X but all uncorrelated with one another (good luck with that setup, but it’s good for this example). I fit this to data that, indeed, have A, B, and C all related to X but also have B and C covarying via their errors. My model’s failure to include this covariation would show up in the fit index as worse fit.
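Here’s that example in numbers. This small sketch uses made-up loadings (0.8, 0.7, 0.6) and a made-up B-C error covariance of 0.2. It compares the covariance matrix implied by the true model with the one implied by the hypothesized model, holding every other parameter at its true value. The only leftover discrepancy (the residual) lands on the B-C cell, and residuals like that are what fit indices summarize. Holding the parameters fixed is an assumption made purely for illustration. In a real analysis, the hypothesized model’s loadings would be re-estimated, which spreads the discrepancy around. Also, with only three indicators, a freely estimated one-factor model has zero degrees of freedom and could soak the discrepancy up entirely.

```python
def implied_cov(loadings, error_cov):
    """Implied covariance for one factor with variance 1: lam_i * lam_j + Theta_ij."""
    n = len(loadings)
    return [[loadings[i] * loadings[j] + error_cov[i][j] for j in range(n)]
            for i in range(n)]


names = ["A", "B", "C"]
lam = [0.8, 0.7, 0.6]                                # hypothetical loadings on X
err_var = [1 - loading ** 2 for loading in lam]      # keeps each variance at 1

# True model: B and C also covary through their errors (0.2 is made up).
theta_true = [[err_var[i] if i == j else 0.0 for j in range(3)] for i in range(3)]
theta_true[1][2] = theta_true[2][1] = 0.2

# Hypothesized model: identical, except the B-C error covariance is left out.
theta_hyp = [[err_var[i] if i == j else 0.0 for j in range(3)] for i in range(3)]

true_cov = implied_cov(lam, theta_true)
hyp_cov = implied_cov(lam, theta_hyp)

# Residual = what the true structure produces minus what the model reproduces.
residual = [[round(true_cov[i][j] - hyp_cov[i][j], 10) for j in range(3)]
            for i in range(3)]

for i in range(3):
    for j in range(i + 1, 3):
        print(f"{names[i]}-{names[j]} residual: {residual[i][j]}")
```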

Got it?

Okay.

Without going into the gory details of how these simulations were constructed and what model misspecification we added so the fit index would have a discrepancy to work with (that is, the proposed model in each simulation purposefully didn’t match the underlying structure of the data, so the fit index should indicate some degree of misspecification), I’ll tell you what we did for this plot. Actually, I’ll tell you as I describe the plot, ’cause I think that’d be easiest.

Recall from like 20 sentences ago: SEM is about creating an accurate representation of the real relationships that exist amongst a set of variables. Those real relationships are called the “true model,” and the researcher’s proposed representation of them is called the “hypothesized model.” I’ve labeled the pic above appropriately.

For the plot at the beginning of this blog, there were actually 18 simulated models, each with two factors and 24 indicator variables. The only difference between these models was how many indicator variables loaded onto each of the two factors. For example, one model looked like this:

(Three indicator variables loading onto Factor 1, 21 indicator variables loading onto Factor 2)

And another model looked like this:

(An equal number of indicator variables (12) loading onto both Factor 1 and Factor 2).

For each model, all the indicators’ errors were uncorrelated except those of V1 and V2 (indicated by the crappily-drawn red arrows). You don’t really need to know what that means to get the rest of this blog; all you need to know is that each model had one extra “path” (or relationship between variables) on top of the relationship between the two factors and the 24 indicator-to-factor relationships. So each model had 26 pathways, or relationships between variables, in total.
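If it helps to see the bookkeeping, here’s a small Python sketch that lists every path in one of these true models. The helper name and the labeling are hypothetical. The sketch assumes V1 through Vk load on Factor 1 (the factor holding the V1-V2 error covariance) and the remaining 24 − k indicators load on Factor 2. Like the count above, it leaves out factor and error variances. Whatever the split, the total comes to 24 loadings + 1 factor covariance + 1 error covariance = 26 paths.

```python
def true_model_paths(k, total=24):
    """Paths in a hypothetical two-factor true model.

    V1..Vk load on Factor 1 (the factor holding the V1-V2 error covariance);
    V(k+1)..V24 load on Factor 2. Variances are not counted as paths.
    """
    if not 2 <= k < total:
        raise ValueError("Factor 1 needs V1 and V2, and Factor 2 needs at least one indicator")
    paths = [("loading", "F1" if i <= k else "F2", f"V{i}") for i in range(1, total + 1)]
    paths.append(("factor covariance", "F1", "F2"))
    paths.append(("error covariance", "V1", "V2"))
    return paths


# A few of the splits along the plot's x-axis.
for k in (3, 7, 12, 21):
    paths = true_model_paths(k)
    on_f1 = sum(1 for kind, factor, _ in paths if kind == "loading" and factor == "F1")
    print(f"{on_f1}:{24 - on_f1} split -> {len(paths)} paths")
```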

Now remember, I said these were simulated models. These models are what actually generated the data I created. Hence, in the context of SEM they can be considered “true models” (see above).

Okay, so we’ve got a bunch of true models. How in the heck do we assess the performance of fit indices?

Easy! By creating a “hypothesized model” that (purposefully, in this case) omits a pathway that’s actually present in the data arising from the true model. In this simulation, that meant that for each true model, I created a hypothesized model that specified every path correctly BUT omitted the correlation between the errors of V1 and V2 (the red-arrow relationship between V1 and V2 would not exist in the hypothesized model).

See what I’m getting at? I’m purposefully creating a hypothesized model that doesn’t match the true model exactly so that I can analyze which fit indices appropriately reflect the discrepancy. Indices that freak out and say “OH YOUR MODEL SUCKS, IT’S TOTALLY NOT AN ACCURATE REPRESENTATION OF THE UNDERLYING DATA STRUCTURE AT ALL” would be too sensitive, as a model that accurately represents 25 out of 26 possible pathways is a pretty damn good one (and is almost unheard of in psychology-related data). However, an index that says, “Hey, you’re a pretty badass researcher, ’cause your model fits PERFECTLY!” isn’t right either; you’re missing a whole pathway, so how can the fit be perfect?
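In path-list terms, building the hypothesized model just means dropping one entry from the true model. Here’s a minimal sketch using a hypothetical `model_paths` helper. It assumes V1 through Vk load on Factor 1, the rest of the 24 indicators load on Factor 2, and variances aren’t counted as paths. It uses the 7:17 split, but any split gives the same count.

```python
def model_paths(k, total=24, include_error_cov=True):
    """Paths in a hypothetical two-factor model with V1..Vk on Factor 1.

    include_error_cov=True gives the true model; False gives the
    hypothesized model, which leaves out the V1-V2 error covariance.
    """
    paths = {("loading", "F1" if i <= k else "F2", f"V{i}") for i in range(1, total + 1)}
    paths.add(("factor covariance", "F1", "F2"))
    if include_error_cov:
        paths.add(("error covariance", "V1", "V2"))
    return paths


true_model = model_paths(7)
hypothesized = model_paths(7, include_error_cov=False)

omitted = true_model - hypothesized          # set difference: paths the model misses
print("omitted:", omitted)
print(f"{len(hypothesized)} of {len(true_model)} paths specified "
      f"({len(hypothesized) / len(true_model):.0%})")
```

The hypothesized model gets 25 of the 26 paths right (about 96%), which is why an index that treats it as garbage is overreacting and one that calls it perfect is asleep at the wheel.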

ANYWAY.

Wow, that was like 20 paragraphs longer than I was expecting.

[INTERMISSION TIME! Go grab some popcorn or something. I’m watching Chicago Hope at the moment, actually. Love that show. Thank you, Hulu. INTERMISSION OVER!]

Back to the plot.

So now that you know what the models were in this case, I can tell you that the x-axis of this plot represents the 18 different models I created. You’ll note the axis label states “# of Indicators per Factor with Misspecification.” This means that for the tick labeled “3,” the correlated errors of V1 and V2 in the true model occurred under the factor with three variables (with the other factor, Factor 2, having the remaining 21 indicator variables loading onto it). The hypothesized model, then, which omits this relationship, looks like this:

At the other end of the plot, the tick labeled “21” is the reverse: the error covariance occurs between variables that load onto the factor with 21 indicator variables.

Make sense?

Probably not ‘cause I’m writing this at like 5 AM and sleep is for wusses and thus I haven’t been partaking in much of it, but I SHALL CARRY ON FOR THE GOOD OF THE NATION!

Remember, for each of the 18 true models, I fit a hypothesized model that matched the true model perfectly, except it OMITTED the error covariance occurring between two indicator variables.

Now let’s look at the y-axis, shall we? You’ll see its label reads “SRMR,” which stands for the Standardized Root Mean Square Residual fit index. This index, as can be seen from the y-axis values, ranges from 0 to 1. The closer the index gets to 1, the better the hypothesized model is said to fit the true model, or the true underlying structure of the data.

Okay, and NOW let’s look at the colored lines. The different colors represent the different strengths of correlation between the two factors in the model. But that’s probably the least important thing right now. So I guess just ignore them, haha, sorry.

Alrighty. Now that you (hopefully kind of sort of) mucked through my crappy, haphazard, rushed explanation of what this graph is showing, take a look at it, particularly at how the lines change as you move left to right on the x-axis.

Do you all see how weird a pattern that is? This plot is basically showing me that the fit index SRMR is sensitive to misspecification in the form of an omitted pathway (relationship between variables), but that this sensitivity jumps all over the damn place depending on the size of the factor on which it occurs. Notice how all the lines take a dive toward a y-axis value of zero (poor fit) when there are 7 indicators belonging to the factor containing the misspecification (and 17 indicators belonging to the factor without the misspecification). Isn’t that WEIRD? Why in the hell does that particular shaped model have such a poor fit according to this index? Why does fit magically improve once this 7:17 ratio is passed and more indicator variables are added to the factor with the error?* By the way, that’s this model:

Freaking SRMR, man. And the worst part of all this is the fact that this is NOT such an aberrant result. ALL of the fit indices I looked at (I looked at seven of them), at least once, performed really, really poorly/counter-intuitively.

This is why this stuff needs studying, yo. Also why new and better indices need to be developed.

Haha, okay, I’m done. Sorry for that.

*Actually this sort of makes sense: the more indicator variables there are loading onto the factor with the error, the more “diluted” that error becomes, and the harder it is for fit indices to pick it up. However, there’s not really an explanation as to why the fit takes a dive UP TO the 7:17 ratio.