Week 34: The Within-Subjects Factorial Analysis of Variance

Today we’re going to look at a test similar to the one we looked at two weeks ago. Specifically, we’re going to look at the within-subjects factorial analysis of variance!

When Would You Use It?

The within-subjects factorial analysis of variance is a parametric test used in cases where a researcher has a factorial design with two* factors, A and B, and has a set of subjects that are measured on each of the levels of all of the factors. The researcher is interested in the following:

- In terms of factor A, in the set of p dependent samples (p ≥ 2), do the factor levels affect the variable of interest across the dependent samples?

- In terms of factor B, in the set of q dependent samples (q ≥ 2), do the factor levels affect the variable of interest across the dependent samples?

- Is there a significant interaction between the two factors?

What Type of Data?

The within-subjects factorial analysis of variance requires interval or ratio data.

Test Assumptions

- Each sample of subjects has been randomly chosen from the population it represents.

- For each sample, the distribution of the data in the underlying population is normal.

- The variances of the k underlying populations are equal (homogeneity of variances).

Test Process

Step 1: Formulate the null and alternative hypotheses. For factor A, the null hypothesis is the claim that the means of the subjects’ scores across the different levels of A are equal. The alternative hypothesis claims otherwise. For factor B, the null hypothesis is the claim that the means of the subjects’ scores across the different levels of B are equal. The alternative hypothesis claims otherwise. For the interaction, the null hypothesis claims that there is no interaction between factor A and factor B. The alternative claims otherwise.

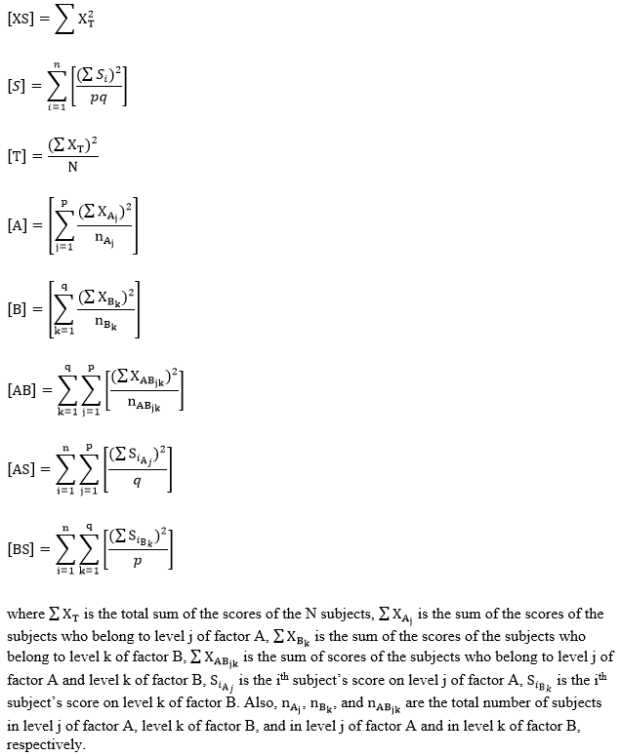

Step 2: Compute the test statistics for the three hypotheses. To do so, we must find SSA, SSB, and SSAB. First, find the following values:

Then, find the SS values as follows:

Then find the MS values:

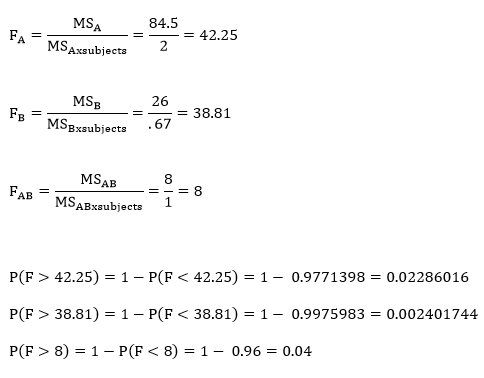

Finally, compute the three test statistics, F-values, for factor A, factor B, and the interaction.
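For reference, here is how the three F ratios are conventionally written for a two-factor within-subjects design. The notation is an assumption and may differ from the book's: m is the number of subjects, p and q are the numbers of levels of A and B, and S stands for subjects. Each MS is its SS divided by its degrees of freedom, and each effect is tested against its own interaction with subjects.

```latex
\begin{aligned}
F_A    &= \frac{MS_A}{MS_{A \times S}},       & df &= (p-1),\ (p-1)(m-1) \\
F_B    &= \frac{MS_B}{MS_{B \times S}},       & df &= (q-1),\ (q-1)(m-1) \\
F_{AB} &= \frac{MS_{AB}}{MS_{AB \times S}},   & df &= (p-1)(q-1),\ (p-1)(q-1)(m-1)
\end{aligned}
```

With m = 3 subjects, p = 2, and q = 3, these degrees of freedom work out to (1, 2) for factor A and (2, 4) for both factor B and the interaction.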

Step 3: Obtain the p-value associated with each calculated F statistic. The p-value indicates the probability of obtaining a ratio of MSA, MSB, or MSAB to its corresponding error mean square that is equal to or larger than the observed F statistic, under the assumption that the null hypothesis is true.
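In R, this upper-tail probability comes from pf(). Here is a minimal sketch, assuming a made-up F value and the degrees of freedom for a two-level factor measured on three subjects (the numbers are illustrative only, not the book's results):

```r
# Hypothetical F statistic and degrees of freedom (illustrative values only)
f_stat    <- 20
df_effect <- 1   # (p - 1) for a factor with p = 2 levels
df_error  <- 2   # (p - 1)(m - 1) with m = 3 subjects

# P(F >= f_stat) when the null hypothesis is true
p_value <- pf(f_stat, df_effect, df_error, lower.tail = FALSE)
p_value
```

Setting lower.tail = FALSE asks for the area to the right of the observed F, which is the p-value for an F test.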

Step 4: Determine the conclusion. If the p-value is larger than the prespecified α-level (or, equivalently, the calculated F statistic is smaller than the critical F value), fail to reject the null hypothesis (that is, retain the claim that the population means are all equal). If the p-value is smaller than the prespecified α-level (the calculated F statistic is larger than the critical F value), reject the null hypothesis in favor of the alternative.
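Comparing the p-value to α and comparing F to the critical F value are two ways of making the same decision. Here is a small sketch in R, again with a made-up F value and degrees of freedom (illustrative only, not the book's results):

```r
alpha <- 0.05

# Hypothetical F statistic and degrees of freedom (illustrative values only)
f_stat    <- 20
df_effect <- 1
df_error  <- 2

# Critical F value: the point with alpha of the distribution to its right
f_crit  <- qf(1 - alpha, df_effect, df_error)
p_value <- pf(f_stat, df_effect, df_error, lower.tail = FALSE)

reject_by_p <- p_value < alpha    # decision based on the p-value
reject_by_f <- f_stat > f_crit    # decision based on the critical value
decision <- if (reject_by_p) "reject H0" else "fail to reject H0"
decision
```

The two logical flags always agree, because pf() and qf() are inverses of each other.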

Example

I don’t have a good example of my own for a within-subjects factorial analysis of variance, so I figured I’d use the example from the book! An experimenter employs a two-factor within-subjects design to determine the effects of humidity (factor A, two levels) and temperature (factor B, three levels) on mechanical problem-solving ability.

Here, n = 18 (three subjects, each measured under all 2 × 3 = 6 conditions), and let α = 0.05.
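To see where the 18 comes from, the layout of the design can be sketched in R. The level labels follow the hypotheses in this example, and the column name subject is an assumption. No scores are included here, only the structure.

```r
# Every subject is measured under every humidity x temperature combination.
# `subject` is an assumed column name; the level labels are illustrative.
design <- expand.grid(
  subject  = factor(1:3),
  humidity = factor(c("low", "high")),
  temp     = factor(c("low", "mod", "high"))
)

nrow(design)                          # one row per score
table(design$humidity, design$temp)   # scores per humidity x temp cell
```

Each of the six cells holds three rows, one per subject, and the same three subjects appear in every cell. That is what makes the design within-subjects.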

H0: µ(low humidity) = µ(high humidity)

Ha: the means are different

H0: µ(low temp) = µ(mod temp) = µ(high temp)

Ha: at least one pair of means is different

H0: there is no interaction between humidity and temperature

Ha: there is an interaction between humidity and temperature

Computations:

Since all of these p-values are smaller than our α-level of 0.05, we would reject the null hypothesis in all three cases.

Example in R

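# Assumes the copied data has columns score, humidity, temp, and a subject ID column (called subject here; that name is an assumption)

x=read.table('clipboard', header=T)

# Treat the subject ID and both factors as categorical

x$subject=factor(x$subject); x$humidity=factor(x$humidity); x$temp=factor(x$temp)

# The Error() term tells aov() that every subject is measured under every humidity x temp condition

fit=aov(score~humidity*temp+Error(subject/(humidity*temp)), data=x)

summary(fit)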
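As a sanity check on what aov() reports for a within-subjects design, the F ratio for humidity can be computed by hand and compared. This is a sketch on simulated scores (not the book's data). It assumes a subject column identifying each participant and an Error() term in the model.

```r
set.seed(1)

# Simulated scores, NOT the book's data: 3 subjects x 2 humidity x 3 temp levels
d <- expand.grid(subject  = factor(1:3),
                 humidity = factor(c("low", "high")),
                 temp     = factor(c("low", "mod", "high")))
d$score <- round(rnorm(nrow(d), mean = 10, sd = 2))

# One mean per subject x humidity cell (averaging over temp): a 3 x 2 matrix
cell  <- tapply(d$score, list(d$subject, d$humidity), mean)
m <- nrow(cell); p <- ncol(cell); q <- nlevels(d$temp)
grand <- mean(cell)

# SS for humidity: squared deviations of the level means from the grand mean
ss_a <- m * q * sum((colMeans(cell) - grand)^2)

# SS for humidity x subjects: cell mean - subject mean - level mean + grand mean
resid <- sweep(sweep(cell, 1, rowMeans(cell)), 2, colMeans(cell)) + grand
ss_as <- q * sum(resid^2)

f_by_hand <- (ss_a / (p - 1)) / (ss_as / ((p - 1) * (m - 1)))

# The same F from aov() with a within-subjects error term
fit   <- aov(score ~ humidity * temp + Error(subject / (humidity * temp)), data = d)
f_aov <- summary(fit)[["Error: subject:humidity"]][[1]][["F value"]][1]

all.equal(f_by_hand, f_aov)
```

The two values should agree, which shows that the humidity effect is being tested against the humidity-by-subjects variation rather than a pooled within-groups term.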

*This test can be done with more factors, but for now, let’s just stick with two.