Today I want to talk about binomial random variables. Specifically, I want to talk about how you can “derive” the binomial probability mass function (pmf) using a simple example.

I want to discuss these things in a way that someone who is completely unfamiliar with statistics can understand them, so let’s start from the beginning!

- What is a binomial random variable?

- What is a probability mass function?

- What is the probability mass function for a binomial random variable?

- How can you “derive” this probability mass function from a simple example?

- What is a binomial random variable?

You can think of a binomial random variable as something that counts how often a particular event occurs in a fixed number of trials. In order for a variable to be a binomial random variable, the following conditions must be met:

- Each trial must be performed the same way, and the trials must be independent of one another

- In each trial, the event of interest either occurs (a “success”) or does not (a “failure”) (in other words, there must be a binary outcome in each trial)

- There are a fixed number, n, of these trials

- In each of the n trials, the probability of a success, p, is the same.

Here are some good “basic” examples of binomial random variables:

- Define a “success” as getting a “heads” on a coin flip. If you flip 10 coins and let X be the number of heads you get from those 10 flips, X is a binomial random variable (n = 10, p = 0.5)

- Define a “success” as rolling a 5 on a 6-sided die. If you roll a die 20 times and let X be the number of times you roll a 5, then X is a binomial random variable (n = 20, p = 1/6)

- The probability of any given person being left-handed is 0.15. If you randomly ask 50 people if they are left-handed or not, and let X be the number of people who are left-handed out of the 50, then X is a binomial random variable (n = 50, p = 0.15).

As you can see, there are really two values we need to know in order to define something as a binomial random variable (which, in reality, is defining the shape of the binomial distribution from which that random variable comes): n, the number of trials, and p, the probability of success on each trial. If X is a binomial random variable, we can express this as X~binom(n,p). This is read as “X follows a binomial distribution with n trials and a probability of success p.” In our previous three examples, we could express the X’s as follows:

- X~binom(10,0.5)

- X~binom(20,1/6)

- X~binom(50,0.15)
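If it helps to see one of these in action, here is a minimal Python sketch that simulates a single draw of each of the three X's above. The helper name `simulate_binomial` and the seed value are my own choices for illustration, not anything standard; the sketch just runs n independent trials and counts the successes.

```python
import random

def simulate_binomial(n, p, rng=random):
    """Run n independent trials, each a success with probability p,
    and return the number of successes (one draw of X ~ binom(n, p))."""
    return sum(1 for _ in range(n) if rng.random() < p)

# A seeded generator so the run is repeatable (the seed is arbitrary).
rng = random.Random(42)

heads = simulate_binomial(10, 0.5, rng)      # X ~ binom(10, 0.5)
fives = simulate_binomial(20, 1 / 6, rng)    # X ~ binom(20, 1/6)
lefties = simulate_binomial(50, 0.15, rng)   # X ~ binom(50, 0.15)

print(heads, fives, lefties)
```

Each call gives one possible value of X. Run it with a different seed and you will usually get different counts, which is exactly what makes X a random variable.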

- What is a probability mass function?

A probability mass function (pmf) is a lot less scary than it sounds. It is simply a function that gives the probability that a (discrete) random variable is exactly equal to some value. For example, suppose X is our random variable. Let x represent a specific value that X could assume. A pmf for X could give us P(X = x), or the probability that X is equal to that specific value x.
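To make that concrete, here is a small Python sketch that builds a pmf by brute force for a tiny example of my own choosing (it is not one of the examples above): X is the number of heads in two fair coin flips. The sketch lists every equally likely outcome and adds up the probability for each value of X.

```python
from fractions import Fraction
from itertools import product

# All equally likely outcomes of two fair coin flips: HH, HT, TH, TT.
outcomes = list(product("HT", repeat=2))

# pmf[x] will hold P(X = x), where X is the number of heads.
pmf = {}
for outcome in outcomes:
    heads = outcome.count("H")
    pmf[heads] = pmf.get(heads, 0) + Fraction(1, len(outcomes))

for x in sorted(pmf):
    print(f"P(X = {x}) = {pmf[x]}")
```

The probabilities over all possible values of X add up to 1, which is true of any pmf.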

- What is the probability mass function for a binomial random variable?

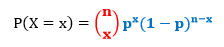

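Let X be a binomial random variable. To find the probability that X equals a specific value x, we use the following formula:

$$P(X = x) = \binom{n}{x} p^x (1-p)^{n-x}$$

where n is the number of trials, p is the probability of success, and

$$\binom{n}{x} = \frac{n!}{x!\,(n-x)!}$$

is the number of ways to choose which x of the n trials are the successes.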

- How can you “derive” this probability mass function from a simple example?

This pmf might look a little complicated. However, we can understand it and how it comes about by looking at a simple example and “working backwards” to get to the above pmf formula. So let’s do it!

Suppose you roll a fair die four times. Let X be the number of times you roll a 1. You want to find the probability of rolling exactly three 1s.

In this example, the number of trials is the number of rolls. So n = 4. The probability of success is the probability of rolling a 1 on any given roll. So p = 1/6. And what we want to find is the probability that exactly three of the four rolls will result in a success. So we want P(X = 3).

Let’s see if we can figure this out without the formula.

We want the probability of getting exactly three successes out of the four rolls, or P(three successes). Another way of stating this is that we want P(success and success and success and failure), or that we want three successes and one failure. Don’t worry about the order yet; we’ll deal with that later.

So. We know that the probability of success on any one roll is 1/6, which means that the probability of failure on any one roll is 5/6. We also know that the rolls are independent of one another, as the outcome of one roll does not affect the outcome of another (e.g., rolling a 5 on the first roll will not affect the outcome of the second roll).

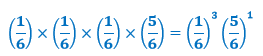

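Because of this independence, our calculation of P(success and success and success and failure) is just going to be the probabilities of each of the four outcomes multiplied by each other, or

$$p \cdot p \cdot p \cdot (1-p) = p^3 (1-p)^1$$

In our example, this is

$$\left(\frac{1}{6}\right)^3 \left(\frac{5}{6}\right)^1 = \frac{5}{1296} \approx 0.00386$$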

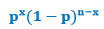

Notice that this is the $p^x (1-p)^{n-x}$ part of the pmf formula when x = 3 and n = 4. This is the part that gives us the probability of three successes and one failure in one particular order. However, we’re not done yet! We also need to take into account the fact that these three successes and one failure can happen in different orders. Specifically, if we denote a success as “S” and a failure as “F,” these can occur in the following orders:

SSSF

SSFS

SFSS

FSSS
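If you don't trust a hand-written list, here is a quick Python sketch that generates every possible sequence of four S's and F's and keeps only the ones with exactly three successes. The variable names are just for illustration.

```python
from itertools import product
from math import comb

# Every possible sequence of 4 trials, each either S (success) or F (failure).
all_sequences = ["".join(seq) for seq in product("SF", repeat=4)]

# Keep only the sequences with exactly three successes.
three_successes = [seq for seq in all_sequences if seq.count("S") == 3]

print(three_successes)
print(len(three_successes), comb(4, 3))
```

Counting the list by hand works fine for four trials, but `math.comb` gives the same count without listing anything, which matters once n gets larger.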

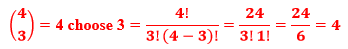

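That is, there are four different ways we can get three successes and one failure when n = 4. So what we need to do now is combine this with our previous calculation above to get

$$4 \cdot \left(\frac{1}{6}\right)^3 \left(\frac{5}{6}\right)^1$$

That 4 is actually the number of combinations of three successes and one failure we can get when n = 4. Another way we could calculate this without writing out all the possible combinations? Use this formula:

$$\binom{n}{x} = \frac{n!}{x!\,(n-x)!}$$

Specifically:

$$\binom{4}{3} = \frac{4!}{3!\,(4-3)!} = \frac{24}{6 \cdot 1} = 4$$

Our final calculation, then, is

$$\binom{4}{3} \left(\frac{1}{6}\right)^3 \left(\frac{5}{6}\right)^1 = 4 \cdot \frac{5}{1296} = \frac{20}{1296} \approx 0.01543$$

So the probability of rolling exactly three 1s out of four rolls is 0.01543, and we can see that in our process of figuring this out, we’ve actually “derived” our binomial formula of

$$P(X = x) = \binom{n}{x} p^x (1-p)^{n-x}$$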

Cool, huh?
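If you want to double-check the 0.01543 figure yourself, here is a short Python sketch. It computes the binomial pmf directly and then compares it against a brute-force count over every possible outcome of four die rolls. The function name `binomial_pmf` is my own; it is not from any library.

```python
from fractions import Fraction
from itertools import product
from math import comb

def binomial_pmf(x, n, p):
    """P(X = x) for X ~ binom(n, p): (n choose x) * p^x * (1 - p)^(n - x)."""
    return comb(n, x) * p**x * (1 - p) ** (n - x)

# Exact answer from the formula, using fractions to avoid rounding.
exact = binomial_pmf(3, 4, Fraction(1, 6))

# Brute force: list all 6^4 equally likely outcomes of four rolls
# and count the ones that contain exactly three 1s.
rolls = list(product(range(1, 7), repeat=4))
hits = sum(1 for roll in rolls if roll.count(1) == 3)
brute_force = Fraction(hits, len(rolls))

print(exact, brute_force, round(float(exact), 5))
```

Both approaches land on 20 out of 1296 (which reduces to 5/324), or about 0.01543, matching the calculation above.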